In 2026, the question for GenAI isn’t “whether.” It’s where it reliably drives outcomes in B2B without creating risk, rework, or trust problems. This guide separates production-proven use cases from areas that are still early or overhyped, and it gives you a roadmap you can defend.

Quick takeaways

- Real wins: search & discovery, support, enablement content, and quoting assist (not autonomous quoting).

- Still early: full autonomy across complex, exception-heavy workflows.

- What “real” means: clear use case + governed data + guardrails + measurable outcomes.

- Roadmap principle: build reusable capabilities (retrieval, permissions, evals), not one-off demos.

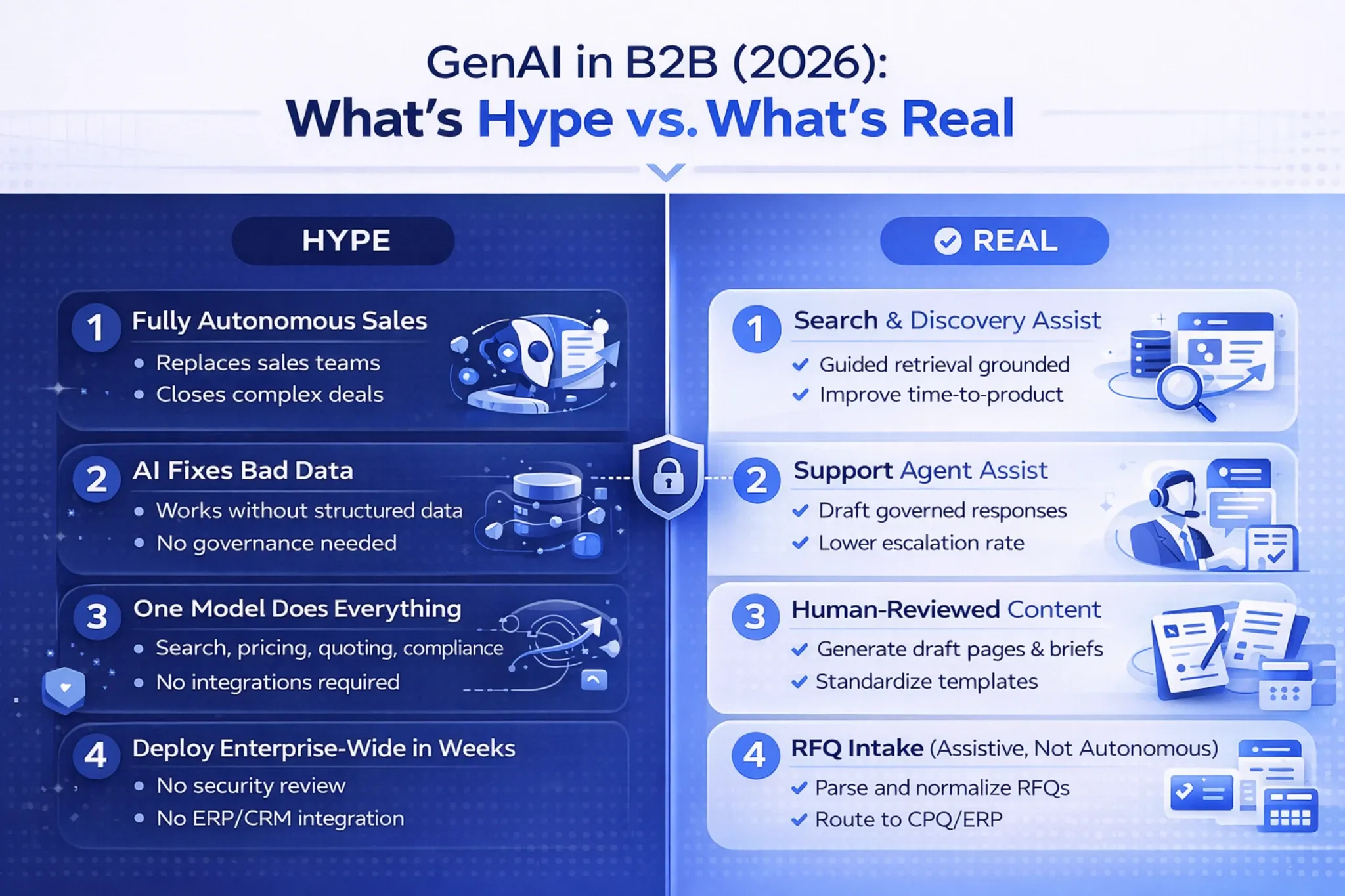

Hype vs. reality in B2B GenAI

B2B leaders are under real pressure to “do GenAI.” But plenty of what looks great in a demo breaks down in production when it meets account-based pricing, entitlements, negotiated terms, long buying cycles, and messy product data. In 2026, the gap between “pilot” and “operational” is still where most teams win or stall.

The most common failure mode is the pilot trap: experiments that never earn the right to scale. Gartner predicted in 2024 that at least 30% of generative AI projects would be abandoned after proof of concept by the end of 2025, citing poor data quality, inadequate risk controls, escalating costs, or unclear business value. Treat that as a roadmap constraint: you’re not just proving capability, you’re proving durability. (Source: Gartner)

Why B2B is different (and why that matters for GenAI)

B2B commerce isn’t a single click. It’s a chain of constraints: permissions, compliance, contract pricing, ship-to rules, approvals, substitutions, and exception handling. If GenAI ignores those realities, it doesn’t just disappoint; it creates downstream rework and erodes trust.

That’s why the winning pattern in 2026 isn’t “a model that knows everything.” It’s a model that can retrieve the right information from governed sources, respect entitlements, and stay inside strict boundaries.
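As a rough illustration of "retrieve from governed sources and respect entitlements," here is a minimal Python sketch. It assumes a toy in-memory document list, role-based permissions, and naive keyword overlap in place of a real search index; the `Doc` class, the role names, and the sample documents are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    doc_id: str
    text: str
    allowed_roles: frozenset  # roles entitled to see this document (assumed model)


def retrieve(query: str, docs: list, user_roles: set, top_k: int = 3) -> list:
    """Return up to top_k permitted docs, ranked by naive keyword overlap."""
    terms = set(query.lower().split())
    # Enforce entitlements before ranking, so restricted text never reaches the model.
    visible = [d for d in docs if d.allowed_roles & user_roles]
    scored = [(len(terms & set(d.text.lower().split())), d) for d in visible]
    matches = [pair for pair in scored if pair[0] > 0]
    ranked = sorted(matches, key=lambda pair: pair[0], reverse=True)
    return [d for _, d in ranked[:top_k]]


# Hypothetical sample content for illustration only.
DOCS = [
    Doc("spec-101", "viton gasket chemical resistant spec sheet", frozenset({"buyer", "sales"})),
    Doc("price-acme", "acme contract pricing gasket tiers", frozenset({"sales"})),
]

buyer_hits = retrieve("chemical resistant gasket", DOCS, {"buyer"})
sales_hits = retrieve("gasket pricing", DOCS, {"sales"})
```

The design point is the order of operations: filtering by permission happens before ranking, so a buyer's query can never surface another account's contract pricing, however relevant it scores.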

If you’re building or modernizing commerce experiences, this aligns naturally with portal and workflow investments in B2B eCommerce and B2B Commerce & Customer Portals.

The “pilot trap” and why 2026 is the year of operationalization

More organizations are experimenting with agent-like systems, but broad scaling is still uneven. McKinsey’s global AI survey has reported meaningful levels of experimentation and early scaling, which is consistent with what many B2B leaders see: progress, but not universal maturity. (Source: McKinsey)

Meanwhile, “agent washing” is real. Gartner has forecast that more than 40% of agentic AI projects will be canceled by the end of 2027 if cost, control, and value don’t hold up under scrutiny. (Source: Reuters, reporting on Gartner) The takeaway for a realistic B2B AI strategy is straightforward: prioritize bounded wins, and scale autonomy only where governance is ready.

Where GenAI Is Delivering Today

GenAI is most reliable in B2B when it works as a copilot for humans or as a bounded assistant that retrieves, summarizes, drafts, and routes. The winning use cases are frequent, text-heavy, and constrained by clear rules. If you’re exploring delivery patterns for AI use cases in B2B, start here.

B2B search & product discovery

Search is one of the cleanest “what’s real” use cases because it’s high-volume and measurable. Buyers want to describe a need in their own language (“chemical-resistant gasket for X temperature range”) and get the right matches quickly.

GenAI helps translate messy language into structured intent, then uses retrieval to return results grounded in your catalog, specs, and documents.

In generative AI for B2B commerce, the practical win isn’t “generating products.” It’s generating understanding: mapping buyer descriptions to SKU families, compatible accessories, and acceptable substitutions.

This works best when the assistant asks clarifying questions and explains results using governed sources, not guesswork.

Customer support & self-service

Support is another proven lane because it sits on top of knowledge and ticket history. GenAI can triage requests, summarize long threads, draft replies, and guide customers to the right troubleshooting steps. The key is grounding answers in approved knowledge and refusing to invent details.
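One way to picture "grounding answers in approved knowledge and refusing to invent details" is the sketch below. It is a toy under stated assumptions: the knowledge-base entries, the `min_overlap` threshold, and the word-overlap matching are all hypothetical stand-ins for a real retrieval and generation stack.

```python
# Hypothetical approved knowledge base, for illustration only.
APPROVED_KB = {
    "kb-12": "Reset the controller by holding the power button for ten seconds.",
    "kb-40": "Replace the filter cartridge every six months.",
}


def answer(question: str, min_overlap: int = 2) -> dict:
    """Answer only from approved articles; otherwise refuse and escalate."""
    terms = set(question.lower().replace("?", "").split())
    best_id, best_score = None, 0
    for kb_id, text in APPROVED_KB.items():
        score = len(terms & set(text.lower().replace(".", "").split()))
        if score > best_score:
            best_id, best_score = kb_id, score
    # Weak or missing evidence means no answer: route to a human instead.
    if best_id is None or best_score < min_overlap:
        return {"status": "escalate", "text": None, "citation": None}
    return {"status": "answered", "text": APPROVED_KB[best_id], "citation": best_id}


grounded = answer("How do I reset the controller?")
refused = answer("What is my contract discount?")
```

Every answered response carries a citation to the article it came from, and anything below the evidence threshold becomes an escalation rather than a guess.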

A common rollout sequence is internal-first: support agent assist before customer-facing chat. That lets you validate quality with real cases, tighten guardrails, and build confidence with measurable metrics like time-to-first-response, average handle time, and escalation rate.

Content & enablement (human-reviewed)

B2B teams create repetitive, high-stakes content: product pages, spec summaries, comparisons, proposal drafts, and internal playbooks. GenAI delivers when it drafts and standardizes output while humans remain accountable for accuracy and compliance. The model should flag missing attributes rather than invent them.

This is also where a platform approach matters. If you’re investing in reusable patterns, tie enablement and knowledge workflows into a governed foundation like Platform AI Solutions so future use cases (support, search, quoting) don’t start from scratch.

Quoting & quote-to-cash assist

Quoting is high value and high risk. A model shouldn’t invent pricing or decide margins, but it can reduce friction in the earliest steps: parsing RFQs, normalizing line items, mapping customer language to internal SKUs, and routing requests into the right workflow. Think “assist and validate,” not “decide.”
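The "assist and validate" idea for RFQ intake can be sketched roughly as follows. This is an illustrative Python example, not a production parser: the alias table, SKU codes, and line pattern are hypothetical, and a real system would pull mappings from governed product data.

```python
import re

# Hypothetical alias table mapping customer wording to internal SKUs.
SKU_ALIASES = {
    "viton gasket 2in": "GSK-VT-200",
    "epdm o-ring 10mm": "ORG-EP-010",
}

# Matches lines such as "10 x Viton gasket 2in" or "25 EPDM O-ring 10mm".
LINE_PATTERN = re.compile(r"^\s*(\d+)\s*(?:x|pcs|ea)?\s+(.+?)\s*$", re.IGNORECASE)


def normalize_rfq(raw_text: str) -> list:
    """Turn free-text RFQ lines into structured items; never assigns a price."""
    items = []
    for line in raw_text.strip().splitlines():
        match = LINE_PATTERN.match(line)
        if not match:
            # Unparseable lines are kept and flagged, not dropped or guessed at.
            items.append({"raw": line.strip(), "qty": None, "sku": None, "needs_review": True})
            continue
        qty, description = int(match.group(1)), match.group(2).lower()
        sku = SKU_ALIASES.get(description)
        items.append({"raw": line.strip(), "qty": qty, "sku": sku, "needs_review": sku is None})
    return items


rfq_items = normalize_rfq(
    "10 x Viton gasket 2in\n25 EPDM O-ring 10mm\n3 pcs mystery widget\nplease rush"
)
```

Note what the function does not do: it produces no price field at all. Unmapped or unparseable lines are flagged for human review, and pricing stays with CPQ/ERP.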

In practice, the best implementations keep CPQ/ERP as the source of truth and use GenAI to accelerate intake, completeness checks, and narrative drafting. If you’re building toward agentic capabilities, this pairs well with a bounded approach to orchestration like Generative AI Agents Solutions rather than full autonomy.

Where It’s Still Early or Overhyped

The risk in 2026 isn’t that you won’t try GenAI. It’s that you’ll chase the wrong version of it: full autonomy in complex workflows without evaluation, identity controls, and governance. B2B exceptions are the rule, not the edge case.

| Area | What’s real in 2026 | What’s overhyped | Safer next step |
|---|---|---|---|
| Sales & RevOps | Prep, summarization, proposal drafts, next-best content | “Replace sales” and fully automated deal execution | Copilot + human approvals + logged recommendations |
| Quoting | RFQ intake + completeness checks + quote narrative drafts | Autonomous pricing and margin decisions | Bounded actions tied to CPQ/ERP rules |
| Support | Grounded answers, agent assist, routed workflows | Uncited answers that “sound right” but aren’t | Retrieval + citations + escalation paths |
| Agentic workflows | Supervised agents with limited privileges | Fully autonomous end-to-end orchestration | Start reversible, require approvals, expand slowly |

Full autonomy in complex workflows

Autonomous agents are appealing until they hit real B2B constraints: entitlements, compliance, pricing guardrails, approvals, and exceptions. In many organizations, the “workflow” is distributed across systems and tribal knowledge. If an agent can’t prove it is safe and correct, it won’t survive contact with audit, security, or finance.

If you want agents in 2026, treat them like interns with limited privileges. Start with supervised actions, narrow scopes, and reversible steps, then expand automation only as evaluation and monitoring mature.
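The "intern with limited privileges" idea might look something like this in code. It is a minimal sketch, assuming a simple allowlist of actions with an approval flag; the action names, the policy fields, and the audit log structure are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ActionPolicy:
    reversible: bool
    needs_approval: bool


# Hypothetical action names and policies, for illustration only.
POLICIES = {
    "draft_quote_narrative": ActionPolicy(reversible=True, needs_approval=False),
    "route_rfq_to_queue": ActionPolicy(reversible=True, needs_approval=False),
    "submit_order": ActionPolicy(reversible=False, needs_approval=True),
}

AUDIT_LOG = []  # every decision is recorded, including denials


def authorize(action: str, approved_by: Optional[str] = None) -> str:
    """Return 'allow', 'pending_approval', or 'deny' for a requested agent action."""
    policy = POLICIES.get(action)
    if policy is None:
        decision = "deny"  # anything not on the allowlist is refused
    elif policy.needs_approval and approved_by is None:
        decision = "pending_approval"
    else:
        decision = "allow"
    AUDIT_LOG.append({"action": action, "approved_by": approved_by, "decision": decision})
    return decision
```

For example, `authorize("submit_order")` returns `"pending_approval"` until a named approver is supplied, while an unlisted action such as `"change_price"` is denied outright. Expanding autonomy then means editing the policy table, not the agent.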

For a deeper look at where agentic commerce can go (and what to do today), see Agentic Commerce in B2B eCommerce: Today vs. 2030.

“Replace sales” narratives vs. augment revenue teams

In B2B, sales isn’t just persuasion; it’s discovery, coordination, and risk reduction. GenAI can reduce admin drag and raise consistency, but it won’t replace stakeholder alignment, negotiation, and exception management. Design for augmentation and throughput, and you’ll get adoption instead of resistance.

One-model-to-rule-them-all thinking

The best production systems in 2026 are hybrid: retrieval + rules + orchestration + human review. Some tasks want creativity (drafting, summarization), while others demand determinism (eligibility, compliance, pricing logic). If you force one approach everywhere, you usually get an expensive assistant that’s impressive in demos and unreliable in production.

What “Real” Looks Like: The Criteria

A practical definition of realistic AI in B2B is simple: it creates measurable outcomes, stays grounded in trusted data, and operates safely inside constraints. If your initiative can’t satisfy those conditions, it’s likely to stall, or to ship and create costly rework.

Production-ready checklist

- Use case clarity: who uses it, when, and what “good” means.

- Constraints: allowed outputs, disallowed outputs, approval requirements.

- Data & entitlements: source of truth, freshness, permission enforcement.

- Guardrails: retrieval/grounding, refusal behavior, logging, monitoring.

- Evaluation: test sets that reflect your edge cases, not generic demos.

- Scoreboard: KPIs leadership already trusts (cycle time, deflection, conversion, rework).
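To make the evaluation item in the checklist above concrete, here is a small sketch of a test set that encodes edge cases, including questions the assistant should refuse. The `toy_assistant` function is a deliberately naive, hypothetical stand-in for the system under test, and the cases are invented for illustration.

```python
def toy_assistant(question: str) -> str:
    """Hypothetical stand-in for the system under test."""
    if "price" in question.lower():
        return "REFUSE"
    return "ANSWER"


# Edge cases drawn from your own workflows would go here; these are invented.
EVAL_CASES = [
    {"question": "What is the torque spec for part A?", "expected": "ANSWER"},
    {"question": "Give me a price for 500 units", "expected": "REFUSE"},
    {"question": "Can you discount this order?", "expected": "REFUSE"},
]


def run_eval(assistant, cases: list) -> dict:
    """Run every case and report the pass rate plus the failing cases."""
    failures = [c for c in cases if assistant(c["question"]) != c["expected"]]
    return {"pass_rate": round(1 - len(failures) / len(cases), 3), "failures": failures}


report = run_eval(toy_assistant, EVAL_CASES)
```

Here the toy assistant misses the "discount" phrasing, so the report surfaces one failure. That is the kind of gap a generic demo would not reveal.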

Clear use case and constraints

Start with a workflow, not a model. Define who it’s for, the moment it appears in the journey, and what outcomes it should change. Then define boundaries: what the assistant can do, what tools it can call, and what requires human approval.

Data readiness (source of truth, permissions, freshness)

B2B success is gated by data quality and access. If product attributes are incomplete, documentation is outdated, or entitlements are inconsistent, GenAI will amplify the mess. The goal isn’t perfect data on day one; it’s a clear source of truth and a plan to close the highest-impact gaps.

Guardrails (grounding, human-in-the-loop, evaluation)

Guardrails are the difference between “helpful” and “safe.” Retrieval and grounding reduce hallucinations by anchoring output in governed content. Human-in-the-loop isn’t a crutch; it’s a scaling strategy that lets you automate the lowest-risk steps first, then expand as confidence grows.

If you want a structured way to think about agent boundaries and protocols in commerce, see Agentic Commerce Protocol (ACP) and Agentic Commerce in B2B: UCP vs ACP Explained. These concepts help teams separate “assistive” from “autonomous” work in a way governance can support.

Measurable outcomes (and a clear scoreboard)

If you can’t measure success, you can’t scale responsibly. Every use case needs a scoreboard tied to business outcomes. For search: conversion, time-to-product, and reduced zero-results queries; for support: deflection, handle time, escalation rate, and satisfaction; for quote-to-cash: time-to-quote, rework rate, and margin integrity.
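A scoreboard like this can start as a few simple ratios. The sketch below assumes hypothetical event records (the field names `results`, `converted`, `self_served`, and `escalated` are illustrative) and computes two of the search and support metrics named above.

```python
def rate(part: int, total: int) -> float:
    """Share of total, rounded to three places; 0.0 when there is no data yet."""
    return round(part / total, 3) if total else 0.0


def search_scoreboard(queries: list) -> dict:
    total = len(queries)
    return {
        "zero_results_rate": rate(sum(q["results"] == 0 for q in queries), total),
        "conversion_rate": rate(sum(q["converted"] for q in queries), total),
    }


def support_scoreboard(tickets: list) -> dict:
    total = len(tickets)
    return {
        "deflection_rate": rate(sum(t["self_served"] for t in tickets), total),
        "escalation_rate": rate(sum(t["escalated"] for t in tickets), total),
    }


# Invented sample data for illustration only.
QUERIES = [
    {"results": 12, "converted": True},
    {"results": 0, "converted": False},
    {"results": 4, "converted": True},
    {"results": 7, "converted": False},
]
TICKETS = [
    {"self_served": True, "escalated": False},
    {"self_served": True, "escalated": False},
    {"self_served": False, "escalated": True},
    {"self_served": False, "escalated": False},
    {"self_served": False, "escalated": False},
]

search_metrics = search_scoreboard(QUERIES)
support_metrics = support_scoreboard(TICKETS)
```

Capturing the same ratios before launch gives you a baseline, which is what lets leadership see whether the use case actually moved the number.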

Want a fast reality check on your candidate use cases, data readiness, and guardrails? We can help you prioritize what ships in 2026.

How to Think About Your 2026 Roadmap

A roadmap that works in 2026 does two things. It prioritizes initiatives that can reach production, and it builds capabilities that compound over time. You want early wins that prove value and foundational work (data, governance, evaluation) that makes the next use case faster.

Prioritize by value × feasibility × risk

Use a simple triage model: value (does it move a KPI?), feasibility (can we ship in 8–12 weeks?), and risk (what happens if it’s wrong?). The best first pilots are typically high-frequency and low-risk: internal enablement, support agent assist, or search augmentation.

| Candidate use case | Value | Feasibility | Risk | Pilot first step |
|---|---|---|---|---|
| Support agent assist | High | High | Low–Med | Draft responses + case summaries (internal only) |
| B2B search assistant | High | Med–High | Med | Conversational refinement + grounded explanations |
| Sales enablement copilot | Med | High | Low | Account briefs + proposal drafts (human-reviewed) |
| RFQ intake & normalization | High | Med | Med–High | Extract + map items; no pricing decisions |
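The ratings in the table can be turned into a rough ranking. The sketch below is one possible scoring scheme, not a standard formula: it assumes Low/Med/High map to 1/2/3, that a range such as "Med–High" averages its endpoints, and that risk divides the score so riskier candidates rank lower.

```python
LEVELS = {"low": 1.0, "med": 2.0, "high": 3.0}


def to_number(rating: str) -> float:
    """Convert 'High' or a range like 'Med–High' to a number (ranges average)."""
    parts = rating.lower().replace("–", "-").split("-")
    return sum(LEVELS[p.strip()] for p in parts) / len(parts)


def triage_score(value: str, feasibility: str, risk: str) -> float:
    # Assumption: risk divides the score, so riskier candidates rank lower.
    return round(to_number(value) * to_number(feasibility) / to_number(risk), 2)


CANDIDATES = [
    ("Support agent assist", "High", "High", "Low–Med"),
    ("B2B search assistant", "High", "Med–High", "Med"),
    ("Sales enablement copilot", "Med", "High", "Low"),
    ("RFQ intake & normalization", "High", "Med", "Med–High"),
]

# Python's sort is stable, so tied candidates keep their table order.
ranked = sorted(CANDIDATES, key=lambda c: triage_score(*c[1:]), reverse=True)
```

Under these assumptions the two internal copilots tie at the top, search follows, and RFQ intake ranks last. Treat the numbers as a conversation starter for prioritization, not a verdict.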

A practical pilot sequence: crawl-walk-run

A pragmatic sequence for 2026 GenAI planning in B2B looks like this:

- Crawl: internal copilots for support or sales enablement (fast feedback, low risk).

- Walk: customer-facing search and self-service grounded in governed content.

- Run: controlled workflow assistance like RFQ intake and quote drafting, with approvals and monitoring.

Operating model: ownership, governance, and enablement

GenAI fails when it’s “everyone’s project” and no one owns the workflow. Assign a business owner for each use case, define approval rights, and make logging and evaluation non-negotiable. This is where a consistent delivery approach matters more than the newest model.

Build vs. buy in 2026: where vendors help vs. custom matters

Vendor copilots can accelerate commodity capabilities (basic chat, summarization, productivity). But B2B differentiation often lives in your data, rules, and processes, especially in commerce, quoting, and entitlements. A common winning strategy is hybrid: buy the basics, build the differentiators with governed retrieval and integrations.

If your commerce platform is already straining under complexity, consider aligning AI priorities with platform modernization. Two relevant reads: B2B eCommerce Best Practices in 2025 and 4 Signs Your B2B eCommerce Platform Is Holding You Back.

Conclusion

In 2026, real GenAI for B2B isn’t about chasing autonomy or replacing teams. It’s about improving the workflows that matter (search, support, content enablement, and quote-to-cash assistance) with grounding, guardrails, and measurable outcomes. The hype fades quickly when leaders ask, “What changed in the business?”

Start with use cases that can ship, prove value, and build reusable assets. Then expand into more complex automation only when your data, governance, and evaluation maturity can support it. If you want help prioritizing what’s real and building a roadmap that survives production, we’re here.

We’ll help you shortlist the highest-ROI use cases, validate data readiness, define guardrails, and build a crawl-walk-run plan that your stakeholders can approve.