🧠 Agentic AI architecture is not “just chat.” It is a system design in which agents pursue goals over time: observing context, deciding what to do next, taking actions through tools, and evaluating outcomes.

✅ This guide is a durable reference you can return to when designing, buying, or governing agentic systems in production.

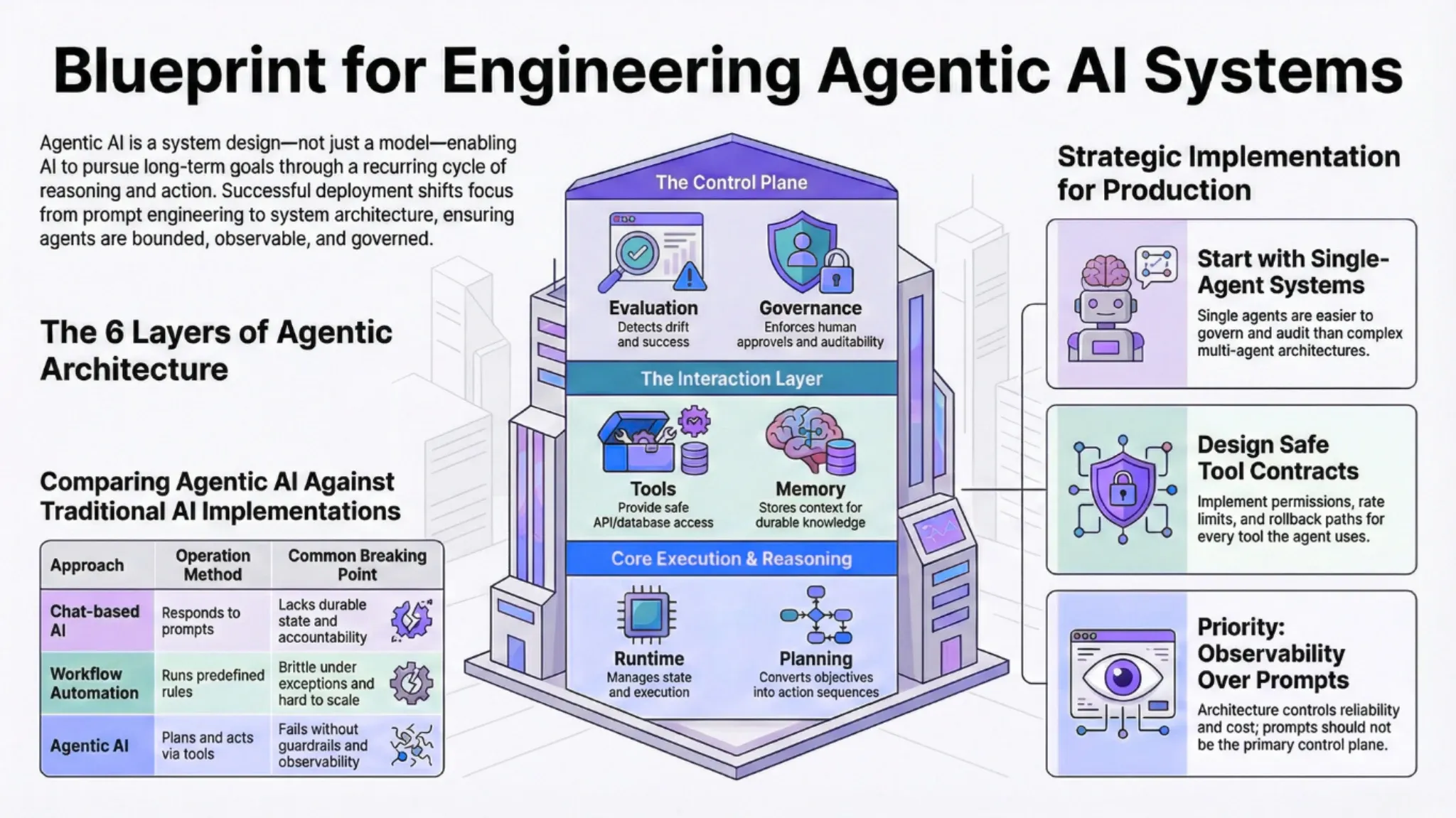

This guide is aimed at anyone working as an **AI architect**, and at readers who want to understand what that role involves in practice.

Introduction to agentic AI architecture

Many teams first encounter agents through demos that look like chat. In practice, an agent is a system with state, tools, policies, and feedback loops, not just a model responding to prompts. That is why agentic AI succeeds or fails on system design choices, not prompt quality alone.

If you are already exploring Autonomous AI Agents or broader Agentic and Autonomous AI services, this reference blog will help you separate architecture fundamentals from tooling details. For additional business context on where agents fit operationally, see how AI agents are applied to business automation.

What is Agentic AI Architecture?

Agentic AI architecture defines how an agent moves through recurring decision cycles: perceiving context, selecting an action, executing it through a tool, and learning from the result. A well-designed architecture makes those cycles reliable, bounded, and observable. A weak architecture produces unpredictable behavior, rising costs, and unclear accountability.
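To make the decision cycle concrete, here is a minimal sketch of a bounded loop. It is a toy, not a reference implementation: the `AgentState` fields, the `increment`/`decrement` tools, and the rule-based `select_action` (which stands in for a model call) are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentState:
    goal: int                                     # target value the toy agent tries to reach
    value: int = 0                                # current observed value
    history: list = field(default_factory=list)   # (action, result) trace


def perceive(state: AgentState) -> int:
    """Observe context: here, just the remaining distance to the goal."""
    return state.goal - state.value


def select_action(gap: int) -> str:
    """Decide what to do next. A real system would consult a model here."""
    if gap > 0:
        return "increment"
    if gap < 0:
        return "decrement"
    return "stop"


# Hypothetical tool registry: each tool maps the current value to a new one.
TOOLS: dict[str, Callable[[int], int]] = {
    "increment": lambda v: v + 1,
    "decrement": lambda v: v - 1,
}


def run_cycle(state: AgentState, max_cycles: int = 10) -> AgentState:
    """Run bounded decision cycles: perceive, select, execute, record."""
    for _ in range(max_cycles):                       # hard bound keeps the loop finite
        action = select_action(perceive(state))
        if action == "stop":
            break
        state.value = TOOLS[action](state.value)      # act through a tool
        state.history.append((action, state.value))   # keep the trace observable
    return state


final = run_cycle(AgentState(goal=3))
print(final.value, len(final.history))  # prints: 3 3
```

The `max_cycles` bound and the recorded `history` are what make even this toy loop bounded and observable.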

It also clarifies where autonomy starts and ends. In most enterprises, the goal is not maximum autonomy; it is safe autonomy that improves outcomes without expanding risk. That means your architecture must encode constraints as first-class components, not afterthoughts.

💬 Chat-based AI

How it operates: Responds to prompts and messages

⚠️ Where it breaks: No durable state, weak action execution, limited accountability

🧩 Workflow automation

How it operates: Runs predefined steps and rules

⚠️ Where it breaks: Brittle under exceptions, costly to maintain at scale

🤖 Agentic AI

How it operates: Plans, acts via tools, evaluates outcomes over time

🚩 Where it breaks: Fails without guardrails, observability, and safe tool design

Core concepts behind agentic AI systems

Agency in AI is an engineering behavior, not a philosophical claim. An agent has an objective, can choose among actions, and can persist across time and context. That persistence is what turns a single model call into a system that can complete multi-step work.

The key design tension is probabilistic reasoning inside deterministic business constraints. Your architecture must translate uncertain model outputs into bounded actions through policies, permissions, and verification steps. This is where many prototypes stall when they move from demos to production.
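One way to picture this translation is a deterministic gate that sits between the model's proposal and the tool call. The sketch below is illustrative only: the `ProposedAction` fields, the tool names, and the thresholds are hypothetical, and a real policy layer would load them from configuration rather than hard-code them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedAction:
    tool: str          # tool the model wants to call
    amount: float      # monetary impact of the action
    confidence: float  # model-reported score in [0, 1] (hypothetical field)


# Illustrative policy values, not recommendations.
ALLOWED_TOOLS = {"issue_refund", "send_email"}
MAX_AUTO_REFUND = 100.0
MIN_CONFIDENCE = 0.8


def gate(action: ProposedAction) -> str:
    """Map an uncertain proposal to one of three bounded outcomes."""
    if action.tool not in ALLOWED_TOOLS:
        return "reject"        # permission check: tool is not on the allowlist
    if action.tool == "issue_refund" and action.amount > MAX_AUTO_REFUND:
        return "escalate"      # policy check: high-value actions need a human
    if action.confidence < MIN_CONFIDENCE:
        return "escalate"      # verification check: low confidence goes to review
    return "execute"


print(gate(ProposedAction("issue_refund", 25.0, 0.95)))   # prints: execute
print(gate(ProposedAction("issue_refund", 500.0, 0.95)))  # prints: escalate
print(gate(ProposedAction("delete_account", 0.0, 0.99)))  # prints: reject
```

The point of the design is that the gate's output is deterministic even though its input is not, so the same proposal always produces the same decision.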

The fundamental building blocks of agentic AI architecture

Most agentic systems can be understood as five layers, often depicted in an **AI agent architecture diagram**: runtime, planning, tools, memory, and evaluation. A sixth layer, governance, controls risk across the others. The goal is not complexity; it is clarity about what each layer owns and how failures are handled.

⏱️ Agent runtime: manages execution cycles, state, and timeouts.

🧠 Planning and reasoning: chooses next actions based on goals and constraints.

🛠️ Tool layer: safe, permissioned access to APIs and workflows, often built on AI process automation patterns.

🧠 Memory layer: short-term context plus long-term retrieval for durable knowledge.

📏 Evaluation and feedback: detects success, failure, and drift over time.

🛡️ Governance: enforces access boundaries, human approvals, and auditability.

How agentic AI systems reason and plan

Planning is the mechanism that converts an objective into a sequence of actions. It can look like open-ended, human-style deliberation, but in practice planning is a loop: propose steps, validate constraints, execute one step, update state, and repeat. Architecture determines how tightly you constrain planning, how you validate outputs, and how you recover from failure.

⚠️ Production reality: Short-horizon planning is safer and easier to audit. That’s why most production agents execute one action at a time with checkpoints.
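A one-action-at-a-time loop with checkpoints might look roughly like the sketch below. The planner, validator, and executor are stand-ins: `propose_step` simply walks a fixed list where a real system would call a model, and the step names are hypothetical.

```python
from typing import Optional


def propose_step(state: dict) -> Optional[str]:
    """Stand-in planner: return the next pending step, or None when finished."""
    pending = [s for s in state["plan"] if s not in state["done"]]
    return pending[0] if pending else None


def validate(step: str, state: dict) -> bool:
    """Constraint check: only steps on the allowlist may run."""
    return step in state["allowed"]


def execute(step: str) -> str:
    """Stand-in executor: a real one would call a tool and return its result."""
    return f"{step}:ok"


def run_plan(state: dict, max_steps: int = 10) -> list:
    """Execute one validated step per iteration, writing a checkpoint each time."""
    checkpoints = []
    for _ in range(max_steps):
        step = propose_step(state)
        if step is None:
            break
        if not validate(step, state):
            # Stop at the first blocked step instead of planning around it.
            checkpoints.append({"step": step, "status": "blocked"})
            break
        result = execute(step)
        state["done"].append(step)  # update state before proposing again
        checkpoints.append({"step": step, "status": "done", "result": result})
    return checkpoints


state = {
    "plan": ["lookup_order", "check_stock", "delete_account"],
    "allowed": {"lookup_order", "check_stock"},
    "done": [],
}
for checkpoint in run_plan(state):
    print(checkpoint)
```

Each checkpoint is a natural place to pause, audit, or resume, which is a large part of why short-horizon execution is easier to govern.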

Longer-horizon planning can work, but it usually requires stronger evaluation, more deterministic tool contracts, and clearer rollback behavior. If you want a practical illustration of where this matters in commerce, see how agentic workflows are applied in B2B ecommerce environments.

Memory and context in agentic AI architecture

Memory is what turns a one-off interaction into a system that can adapt and improve over time. Short-term memory holds the immediate working context for a decision cycle. Long-term memory stores facts, preferences, histories, and prior outcomes that should influence future actions.

⚠️ The real architectural question: It’s not whether to store more data; it’s whether you can retrieve the right data at the right time.

Retrieval-augmented approaches help agents ground decisions in enterprise knowledge, but they also introduce risks like outdated information and contamination. If your team is formalizing this layer, AI infrastructure capabilities such as vector databases and semantic search are often foundational.
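The outdated-information risk can be illustrated with a small retrieval sketch that filters by freshness before ranking. Note the substitution: this uses plain keyword overlap in place of vector similarity so that it runs without any external service, and the `MemoryItem` shape and 90-day cutoff are assumptions for the example.

```python
from dataclasses import dataclass


@dataclass
class MemoryItem:
    text: str
    age_days: int  # how long ago the item was last verified (hypothetical field)


def score(query: str, item: MemoryItem) -> int:
    """Keyword overlap, standing in for a semantic similarity score."""
    return len(set(query.lower().split()) & set(item.text.lower().split()))


def retrieve(query: str, store: list, max_age_days: int = 90, top_k: int = 2) -> list:
    """Drop stale items first, then return the best-matching fresh ones."""
    fresh = [m for m in store if m.age_days <= max_age_days]
    ranked = sorted(fresh, key=lambda m: score(query, m), reverse=True)
    return [m for m in ranked[:top_k] if score(query, m) > 0]


store = [
    MemoryItem("refund policy allows returns within 30 days", age_days=10),
    MemoryItem("refund policy allows returns within 14 days", age_days=400),
    MemoryItem("shipping takes five business days", age_days=5),
]
hits = retrieve("what is the refund policy", store)
print([m.text for m in hits])
# prints: ['refund policy allows returns within 30 days']
```

The stale 14-day policy matches the query just as well as the current one, so without the freshness filter the agent would have no way to prefer the right answer.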

When teams need language interfaces on top of retrieval and memory, they often pair that infrastructure with LLM-powered AI solutions that support contextual search and grounded responses.

Tooling and environment interaction

Tools are how agents act. A tool can be an API call, a database query, a workflow trigger, or a transaction in a business system. Your architecture should define tool contracts clearly, including inputs, outputs, error handling, timeouts, and side effects.

✅ Deployable agents have safe tools: idempotent actions, rate limits, approvals for high-risk steps, and rollback paths where possible.
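As a rough sketch of what a safe tool contract can look like in code, the example below shows input validation, an idempotency key, and an approval requirement for high-risk calls. `RefundTool`, its fields, and the threshold are hypothetical; timeouts, rate limits, and rollback are left out to keep it short.

```python
from dataclasses import dataclass, field


@dataclass
class ToolResult:
    ok: bool
    output: str = ""
    error: str = ""


@dataclass
class RefundTool:
    """Hypothetical tool with an explicit input/output/error contract."""
    approval_threshold: float = 100.0               # illustrative value
    side_effects: int = 0                           # counts real executions
    _processed: dict = field(default_factory=dict)  # idempotency key -> result

    def call(self, idempotency_key: str, order_id: str,
             amount: float, approved: bool = False) -> ToolResult:
        if amount <= 0:
            return ToolResult(ok=False, error="amount must be positive")
        if idempotency_key in self._processed:
            # Retry with the same key: return the stored result, do not repeat.
            return self._processed[idempotency_key]
        if amount > self.approval_threshold and not approved:
            return ToolResult(ok=False, error="approval required")
        self.side_effects += 1                      # the side effect happens once
        result = ToolResult(ok=True, output=f"refunded {amount:.2f} on {order_id}")
        self._processed[idempotency_key] = result
        return result


tool = RefundTool()
first = tool.call("key-1", "A100", 20.0)
retry = tool.call("key-1", "A100", 20.0)
print(first.output, tool.side_effects)    # prints: refunded 20.00 on A100 1
print(tool.call("key-2", "A101", 500.0).error)  # prints: approval required
```

Errors come back as structured results rather than exceptions, which makes them easier for a planner or controller to handle without guessing.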

If you want a practical business lens on where these tool patterns show up first, the article on business automation use cases for AI agents is a helpful complement.

Orchestration and control layers

Orchestration is how you control multiple moving parts within agentic systems, often leveraging dedicated **AI orchestration platforms**. It includes routing tasks, scheduling execution, enforcing cost and latency budgets, and coordinating handoffs between tools and humans. Without effective orchestration, agentic systems become difficult to predict and expensive to run.

Many organizations implement orchestration as a controller that applies policies, rather than an additional autonomous agent. This keeps governance explicit and helps teams debug behavior quickly. In practice, orchestration often evolves from early prototypes into a formal operating layer as the system scales.
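A controller of this kind can be quite small. The sketch below enforces a cost budget and a step budget and signals human handoffs. The class and field names are assumptions, and costs are tracked in integer cents to avoid floating-point comparison issues.

```python
from dataclasses import dataclass


@dataclass
class Budget:
    max_cost_cents: int
    max_steps: int


@dataclass
class StepOutcome:
    cost_cents: int
    needs_human: bool = False


class Controller:
    """Plain policy-applying controller, not an autonomous agent."""

    def __init__(self, budget: Budget) -> None:
        self.budget = budget
        self.spent_cents = 0
        self.steps = 0

    def admit(self, estimated_cost_cents: int) -> str:
        """Decide whether the next step may run under the remaining budgets."""
        if self.steps >= self.budget.max_steps:
            return "halt:step_budget"
        if self.spent_cents + estimated_cost_cents > self.budget.max_cost_cents:
            return "halt:cost_budget"
        return "run"

    def record(self, outcome: StepOutcome) -> str:
        """Account for a finished step and route the handoff if one is needed."""
        self.spent_cents += outcome.cost_cents
        self.steps += 1
        return "handoff:human" if outcome.needs_human else "continue"


controller = Controller(Budget(max_cost_cents=10, max_steps=3))
print(controller.admit(4))                                      # prints: run
print(controller.record(StepOutcome(4)))                        # prints: continue
print(controller.record(StepOutcome(4, needs_human=True)))      # prints: handoff:human
print(controller.admit(4))                                      # prints: halt:cost_budget
```

Because every decision is a simple comparison against explicit limits, the reason for a halt is always visible in the return value.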

Single-agent and multi-agent architectures

Single-agent architectures are usually the best starting point. They are simpler to observe, cheaper to operate, and easier to govern. Multi-agent architectures can be powerful, but they introduce coordination overhead and failure modes that are hard to diagnose.

🧍 Single-agent

- Best for bounded workflows and clear objectives

- Simpler permissions and audit trails

- Lower overhead and fewer coordination cycles

- Works with basic monitoring and evaluation

🧑‍🤝‍🧑 Multi-agent

- Best when work can be decomposed into specialized roles

- Harder to contain blast radius and explain decisions 🚩

- Higher overhead from coordination and message passing

- Requires strong observability, testing, and policy enforcement

If you want real operational examples of multi-step workflows and where handoffs break, the blog on automating sales and ordering with agentic AI is a useful complement. The most common pattern is to start with a single constrained agent and add specialization only after measurement is stable.

Observability and evaluation in agentic systems

Observability answers a simple question: why did the agent do that? At minimum, you need structured logs for tool calls, decision traces, and outcomes. Evaluation turns those traces into signals you can use to improve reliability and cost.

- Completion rate

- Exception rate

- Time-to-resolution

- Cost per successful outcome

Over time, evaluation should include regression tests that catch drift, policy violations, and degraded tool performance. In enterprise settings, evaluation is also part of audit readiness.
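The four metrics listed above can be computed directly from structured logs. Here is a minimal sketch, assuming one JSON record per agent run with hypothetical `status`, `seconds`, and `cost_usd` fields; real log schemas will differ.

```python
import json


def summarize(log_lines: list) -> dict:
    """Turn JSON log lines (one per agent run) into evaluation metrics."""
    records = [json.loads(line) for line in log_lines]
    total = len(records)
    successes = [r for r in records if r["status"] == "success"]
    exceptions = [r for r in records if r["status"] == "exception"]
    total_cost = sum(r["cost_usd"] for r in records)
    return {
        "completion_rate": len(successes) / total if total else 0.0,
        "exception_rate": len(exceptions) / total if total else 0.0,
        # Time-to-resolution is averaged over successful runs only.
        "avg_seconds_to_resolution": (
            sum(r["seconds"] for r in successes) / len(successes)
            if successes else None
        ),
        # All spend, including failed runs, is charged to the successes.
        "cost_per_success": total_cost / len(successes) if successes else None,
    }


# Sample runs, serialized the way a structured logger might emit them.
logs = [
    json.dumps({"run": 1, "status": "success", "seconds": 10, "cost_usd": 0.5}),
    json.dumps({"run": 2, "status": "success", "seconds": 30, "cost_usd": 0.5}),
    json.dumps({"run": 3, "status": "exception", "seconds": 5, "cost_usd": 0.25}),
    json.dumps({"run": 4, "status": "failed", "seconds": 60, "cost_usd": 0.75}),
]
print(summarize(logs))
```

Dividing total cost by successful outcomes, rather than by all runs, is a deliberate choice here: it makes wasted spend on failed runs show up in the number.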

Governance and safety considerations

Governance is what makes agentic AI architecture enterprise-ready. It defines access boundaries, approval thresholds, audit trails, and accountability. Without governance, autonomy becomes risk rather than leverage.

🚨 Red flag: If your agent can “do things” in production but you can’t explain or replay why it did them, you don’t have an architecture—you have a liability.

- Access control: separate what the agent can read from what it can change.

- Human oversight: approvals at decision boundaries, not every step.

- Blast radius containment: rate limits, transaction caps, and environment isolation.

- Auditability: durable logs for actions, inputs, and policy checks.
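Three of the controls above (access control, blast radius containment, and auditability) can be sketched together in a few lines. The `Governor` class, its scopes, and the write cap are hypothetical, and an in-memory list stands in for a durable audit store.

```python
from dataclasses import dataclass, field


@dataclass
class Governor:
    read_scopes: set      # resources the agent may read
    write_scopes: set     # resources the agent may change (kept separate)
    max_writes: int       # transaction cap that bounds the blast radius
    writes_used: int = 0
    audit_log: list = field(default_factory=list)

    def authorize(self, kind: str, resource: str) -> bool:
        """Check one request and record the decision, allowed or not."""
        if kind == "read":
            allowed, check = resource in self.read_scopes, "read_scope"
        elif kind == "write":
            if resource not in self.write_scopes:
                allowed, check = False, "write_scope"
            elif self.writes_used >= self.max_writes:
                allowed, check = False, "write_cap"
            else:
                allowed, check = True, "write_scope"
                self.writes_used += 1
        else:
            allowed, check = False, "unknown_kind"
        # Denials are logged too, so a replay shows what was attempted.
        self.audit_log.append(
            {"kind": kind, "resource": resource, "allowed": allowed, "check": check}
        )
        return allowed


gov = Governor(read_scopes={"orders", "customers"}, write_scopes={"orders"}, max_writes=1)
print(gov.authorize("read", "customers"))   # prints: True
print(gov.authorize("write", "customers"))  # prints: False
print(gov.authorize("write", "orders"))     # prints: True
print(gov.authorize("write", "orders"))     # prints: False
print(gov.audit_log[-1]["check"])           # prints: write_cap
```

Human approvals are not modeled in this sketch; in practice they would sit at the same decision point, before the write is allowed.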

Implementing agentic AI architecture in practice

A practical implementation sequence, often guided by proven **agentic design patterns**, is to start with one constrained workflow, one agent, and a small toolset. Focus first on tool contracts, policy enforcement, and measurement, then expand scope only after reliability is stable. This is also where many teams benefit from aligning agent design with a broader generative AI agent solution approach that prioritizes integration and operating model readiness.

🎯 Practical checkpoint: If the business can’t define success in measurable terms, autonomy should not increase. If outcomes can be measured, autonomy can expand safely with clear guardrails ✅

Common architectural mistakes and misconceptions

🚩 Mistake #1: Treating prompts as system logic

Prompts help behavior, but architecture controls reliability, safety, and cost. When prompts become the control plane, failures become harder to reproduce and fix.

⚠️ Mistake #2: Going multi-agent before measurement is stable

Coordination introduces emergent behavior that can look like intelligence during demos and chaos in production. Start with a constrained single agent, add specialization next, and move to multi-agent only when it is justified.

How to evaluate whether agentic AI architecture is right for your use case

Agentic architecture is most valuable when work requires multi-step reasoning, tool use, and adaptation to variability. If your process is stable and rule-based, classic automation may be simpler and safer. If your process includes frequent exceptions and complex decision paths, agents can reduce cycle time and manual handling when governed well.

✅ Simple test: Can the work be expressed as a set of safe actions with clear permissions and measurable outcomes? If you can define safe actions, you can pilot a constrained agent and learn quickly.

If you cannot define safe actions, you cannot safely deploy an agent. If you can define them, start small, measure reliability, and expand scope deliberately.

Conclusion and architectural checklist

Agentic AI architecture is a system discipline. The model matters, but the architecture determines whether the system can act safely, recover from failure, and produce durable value. Teams that invest early in tool contracts, observability, and governance ship faster and avoid expensive rework.

🧾 Checklist (paste into your build plan)

- Define the agent objective and success metrics in business terms.

- Design safe tools with permissions, timeouts, and rollback paths.

- Add memory only when you can measure retrieval quality and drift.

- Implement observability so decisions and actions are explainable.

- Apply governance to constrain blast radius and enable audits.

If you are mapping this to real workflows, start with one narrow process and expand as reliability improves. If you want to sanity-check your tool boundaries, memory needs, and operating model, start with a quick discovery around Autonomous AI Agents and align the build plan to the controls your teams can actually run.